For most of computing history, packaging was an afterthought. Once a chip came off the wafer, it had to be enclosed in something that would protect it and connect it to a circuit board. But until recently, what mattered most was the silicon. Investors, journalists and customers all paid attention to the same thing: how many transistors you could fit on a die, and how fast they could switch.

That has changed in the last few years, and it has changed more than most people realise. The hardest constraint on the AI buildout in 2026 is not GPU design and not wafer fabrication. It is the step that comes after both — taking a finished GPU die, attaching several stacks of high-bandwidth memory to it, mounting the assembly onto a silicon interposer, and turning it into something that can actually be sold. Only a handful of facilities in the world can do this at the volumes Nvidia and its peers now require, and almost all of them are running flat out.

Contents

1. Why packaging is suddenly the story

2. Seven techniques, what each is for

3. The players, by what they actually sell

4. Why HBM is not a packaging fight

5. Why 2.5D is a foundry fight in disguise

6. The geography of the chokepoint

7. What changes by 2028

8. The takeaway

Why packaging is suddenly the story

The reason packaging has moved to the centre of the conversation is that progress on the silicon itself has become much harder. For four decades, every generation of chips ran faster than the last because the transistors got smaller. Around 2018 that pattern started to break down. The transistors kept shrinking, but the cost of each new manufacturing node rose faster than the performance gain coming out of it. The industry was running into physics, and the economics of pure miniaturisation stopped working.

What replaced it was a different idea: if you cannot make one die faster, build a system out of several dies and connect them very tightly. Place a GPU compute die next to a few stacks of memory and the result behaves like one chip but with bandwidth and capacity that no monolithic die could match. This is the basic concept behind what the industry calls advanced packaging, and it explains why an Nvidia H200 has roughly 40% more memory bandwidth than an H100 (about 4.8 TB/s against 3.35 TB/s) even though both are built on the same transistor process. The transistors did not get faster. The gain came from the memory packaged next to them.
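The bandwidth comparison can be checked with simple arithmetic: peak HBM bandwidth is roughly the number of memory stacks, times the data pins per stack, times the data rate per pin. The sketch below is illustrative only. The stack counts, the 1,024 pins per stack and the per-pin rates are approximate public figures added here as assumptions, not numbers taken from this article.

```python
def hbm_bandwidth_tb_s(stacks: int, pins_per_stack: int, gbit_per_pin: float) -> float:
    """Peak memory bandwidth in decimal terabytes per second."""
    total_gbit_s = stacks * pins_per_stack * gbit_per_pin
    return total_gbit_s / 8 / 1000  # bits -> bytes, then GB -> TB


# Assumed, approximate configurations (not from the article):
# H100: five active HBM3 stacks at about 5.2 Gbit/s per pin
# H200: six HBM3E stacks at about 6.25 Gbit/s per pin
h100 = hbm_bandwidth_tb_s(stacks=5, pins_per_stack=1024, gbit_per_pin=5.2)
h200 = hbm_bandwidth_tb_s(stacks=6, pins_per_stack=1024, gbit_per_pin=6.25)

print(f"H100 ~{h100:.2f} TB/s, H200 ~{h200:.2f} TB/s, ratio {h200 / h100:.2f}x")
```

Under these assumptions the whole gain comes from one extra memory stack and faster memory pins, which are packaging and memory changes rather than changes to the logic process.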

Once you accept that proximity has become as important as miniaturisation, a lot of strategic moves in the industry start to make sense. SK hynix’s $3.87 billion plant in Indiana, TSMC’s outsourcing of orders to Amkor, the CHIPS Act’s emphasis on packaging onshoring — these are all fights over a few square metres of factory floor where dies are placed close to each other.

Seven techniques, what each is for

Before getting to the companies, it helps to understand the techniques. There are roughly seven types of packaging that matter commercially, and they are not really substitutes for each other — each one solves a different engineering problem and ends up dominating a particular kind of chip.

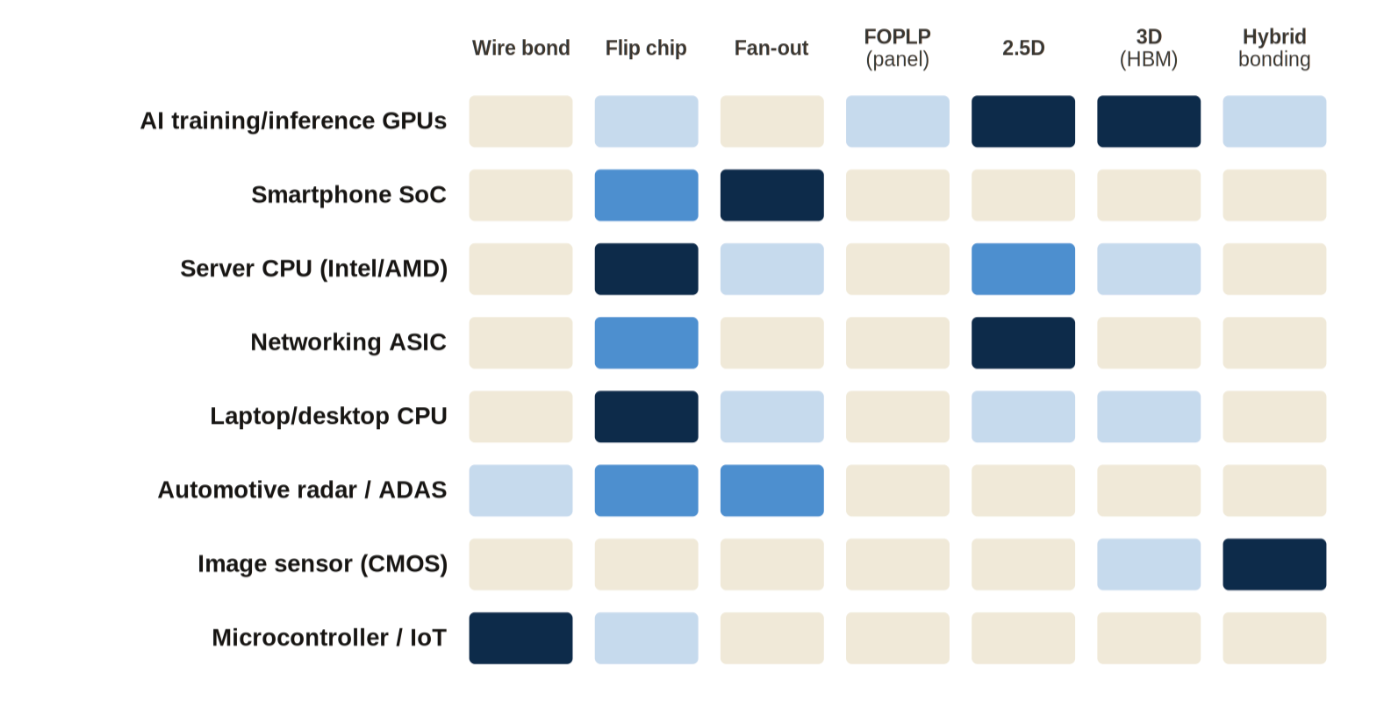

Figure 1. Which packaging type fits which application. Darker cells indicate stronger fit.

The two oldest and cheapest techniques, wire bond and flip chip, still account for the vast majority of chips by volume. Wire bond goes into anything that does not need to be especially fast: microcontrollers, automotive ECUs, the chips inside appliances. Flip chip is the workhorse for most server CPUs and almost every smartphone, often combined with fan-out packaging for the mobile parts. These businesses are not glamorous, but tens of billions of these chips ship every year and the supply chains around them are stable.

What matters strategically sits in the top-right corner of the grid. 2.5D packaging — placing multiple dies side by side on a shared silicon interposer — and 3D HBM — stacking memory dies vertically and bonding them through the silicon — are the only two techniques used across AI accelerators, high-end networking ASICs, and the latest server CPUs. They are the techniques that take a GPU and turn it into a usable system, and the companies that dominate them are unusually concentrated. TSMC accounts for almost all of the leading-edge 2.5D. SK hynix accounts for the majority of HBM.

The players, by what they actually sell

The competitive landscape is easier to read once we look at where each player’s revenue actually comes from. Several of the companies that get grouped together in news coverage barely compete with one another at all.

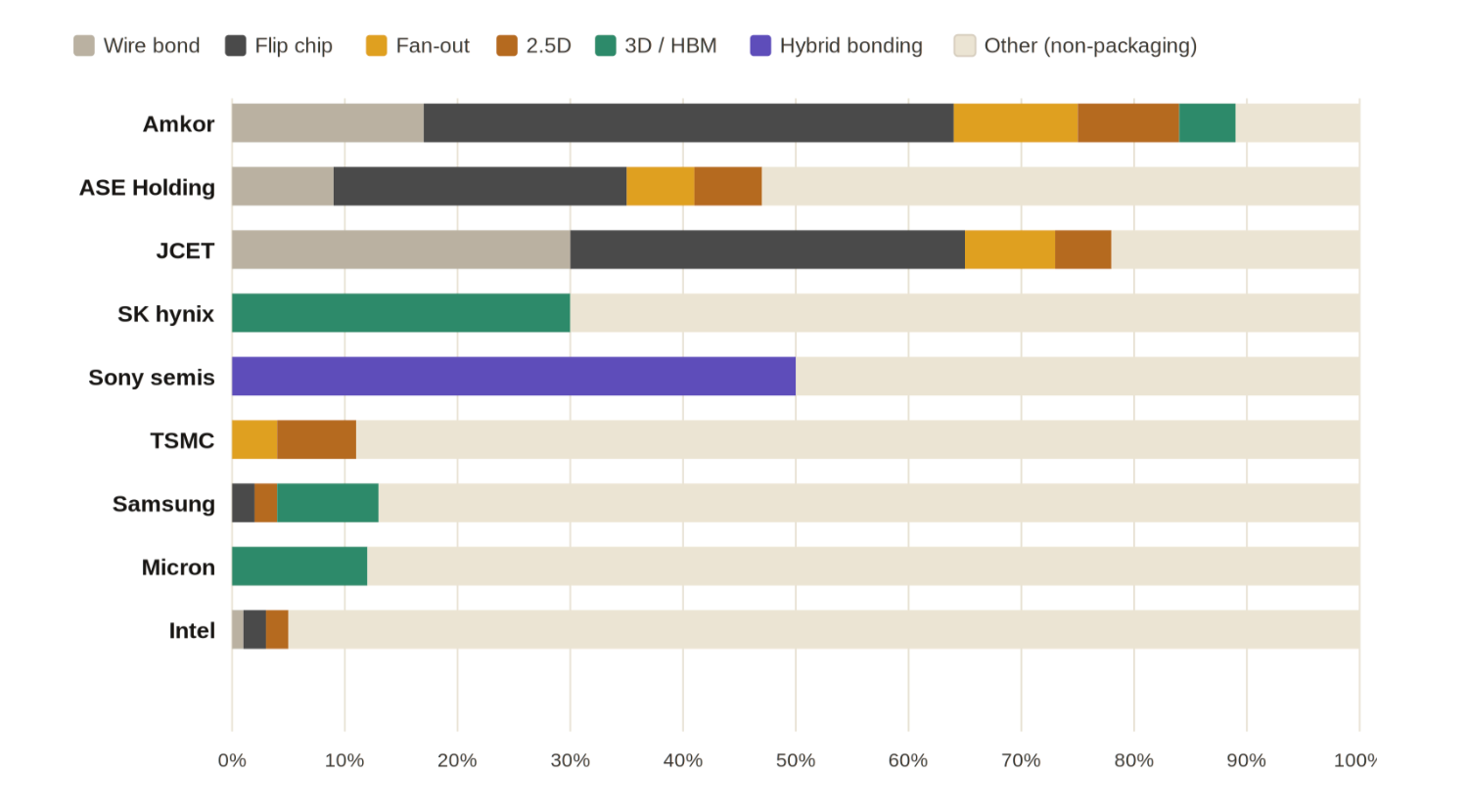

Figure 2. Each player’s revenue mix by packaging type. Packaging segments do not sum to 100% because most companies also do non-packaging work.

The OSATs — Amkor, ASE, JCET — are pure packaging companies. They do not design chips and they do not run wafer fabs; almost everything they earn comes from the coloured portion of their bar. ASE alone packages roughly a third of all chips made on Earth, mostly through high-volume mainstream techniques. Their economics are stable but the margins are thin.

The memory companies show up very differently. SK hynix, Micron and Samsung each have a thin slice of green on the chart, representing HBM, and a much larger "other" segment that is mostly standard DRAM and NAND flash. The small size of that slice is misleading, because the rest of the memory business (server DRAM, mobile DRAM, NAND for SSDs) is a commodity market growing at single-digit percentages a year. HBM grows at more than 40% a year and earns five to six times the gross margin of regular DRAM. Micron has told investors it expects roughly $8 billion of HBM revenue in 2026, all of it sold before the year started. Almost all of the growth in memory revenue right now is coming from this small, packaging-related slice.
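A rough way to see how a thin slice can drive most of the growth is to weight each segment's growth rate by its share of revenue. The sketch below is a minimal illustration. The 20% starting share and the 5% commodity growth rate are hypothetical inputs chosen for the example; the article gives only "more than 40%" for HBM and "single digits" for the rest.

```python
def growth_contribution(share: float, segment_growth: float, rest_growth: float) -> float:
    """Fraction of total revenue growth contributed by one segment.

    share: the segment's share of current revenue (0..1)
    segment_growth, rest_growth: annual growth rates (0.40 means 40%)
    """
    segment_delta = share * segment_growth
    rest_delta = (1 - share) * rest_growth
    return segment_delta / (segment_delta + rest_delta)


# Hypothetical mix: HBM is 20% of revenue and grows 40%; everything else grows 5%.
hbm_part = growth_contribution(share=0.20, segment_growth=0.40, rest_growth=0.05)
print(f"HBM share of total revenue growth: {hbm_part:.0%}")
```

With these inputs HBM supplies about two-thirds of the growth from one-fifth of the revenue. The fraction rises further if commodity growth is slower or the HBM share is larger, and the margin gap described above would widen it again in profit terms.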

TSMC is the most interesting case on the chart. Only about 11% of its revenue comes from packaging today, but that small share is what protects the rest of the business. The packaging operation is the reason TSMC’s customers find it almost impossible to switch to anyone else, even when capacity is tight. Why that is the case is worth a section of its own, and the next few visuals make it clearer.

The same data tells a different story when you flip it around and ask, for each packaging type, who actually has the global market share.

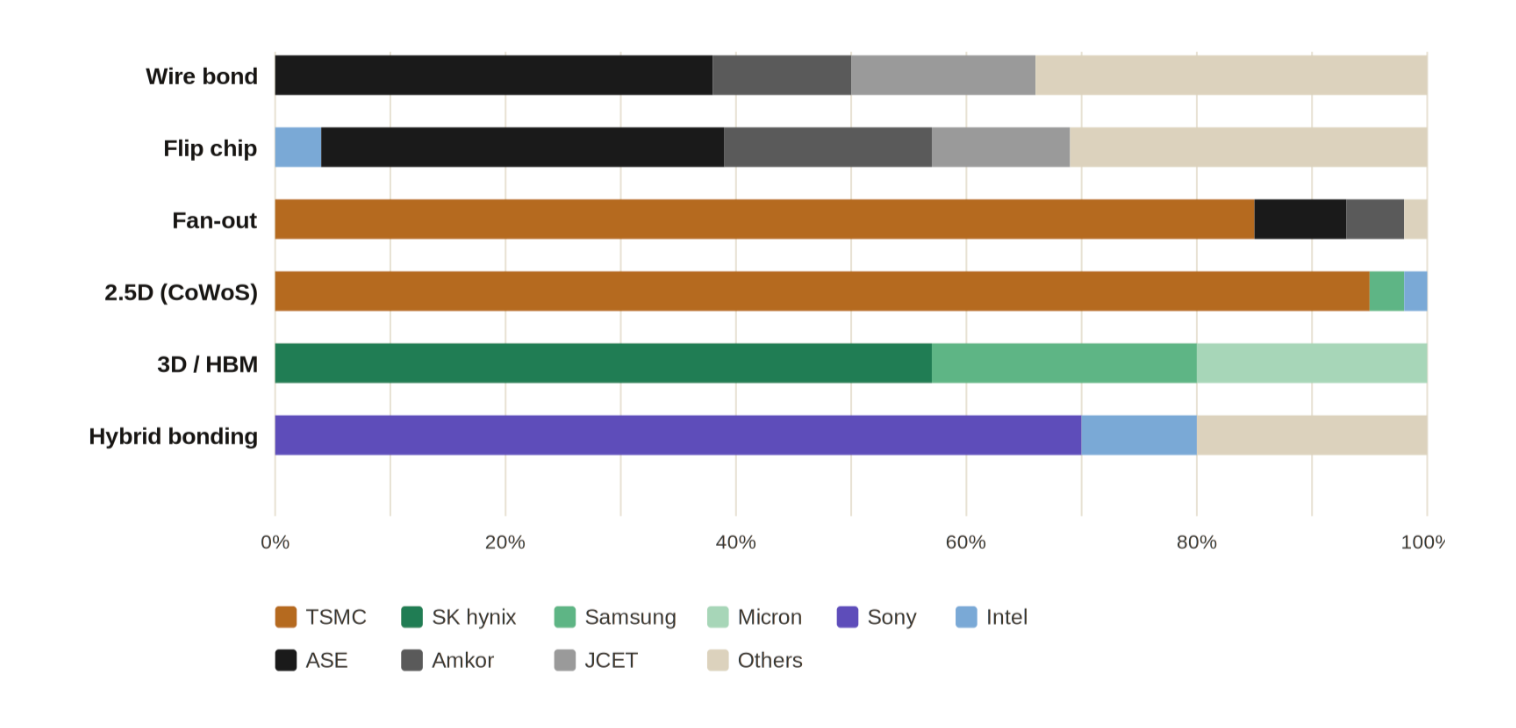

Figure 3. Within each packaging category, who has what share of global volume. Concentration rises sharply as you move down the stack.

The pattern in this chart is the most important thing in this article. The market gets dramatically more concentrated as you move down the stack. Wire bond is genuinely competitive — half a dozen companies hold meaningful shares. Flip chip is tighter but still has multiple credible suppliers. By the time we reach 2.5D CoWoS, TSMC alone is responsible for roughly 95% of global capacity at the leading edge. HBM is split between only three companies in the world, and SK hynix has more than half of that. Hybrid bonding is even more concentrated, divided mainly between Sony for image sensors and SK hynix for next-generation memory. The competitive part of the packaging market is the part AI does not really need; the part AI does need is supplied, in each technique, by no more than two or three firms globally.
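One standard way to put a number on this pattern is the Herfindahl-Hirschman Index (HHI), the sum of squared market shares expressed in percent, where 10,000 means a pure monopoly. The article does not use HHI; it is brought in here purely as an illustration. Only the ~95% TSMC figure and the ~53% SK hynix figure come from the text. Every other share below is a hypothetical placeholder.

```python
def hhi(shares_percent: list[float]) -> float:
    """Herfindahl-Hirschman Index: sum of squared market shares (in percent)."""
    return sum(s ** 2 for s in shares_percent)


# Shares in percent. Values other than 95 and 53 are hypothetical placeholders.
markets = {
    "wire bond (hypothetical six-way split)": [25, 20, 20, 15, 10, 10],
    "HBM (SK hynix ~53%, remainder split hypothetically)": [53, 27, 20],
    "leading-edge 2.5D (TSMC ~95%, remainder hypothetical)": [95, 5],
}

for name, shares in markets.items():
    print(f"{name}: HHI = {hhi(shares):,.0f}")
```

Under these placeholder splits the index rises from under 2,000 for wire bond to roughly 9,000 for leading-edge 2.5D, which is the same concentration gradient the chart shows.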

Why HBM is not a packaging fight

A common confusion in coverage of AI hardware is the assumption that SK hynix and TSMC compete with each other, because both names appear in any explanation of how an Nvidia chip is built. They actually sit in completely different parts of the supply chain and hand the work off to one another. Untangling this is one of the more useful steps toward understanding the industry.

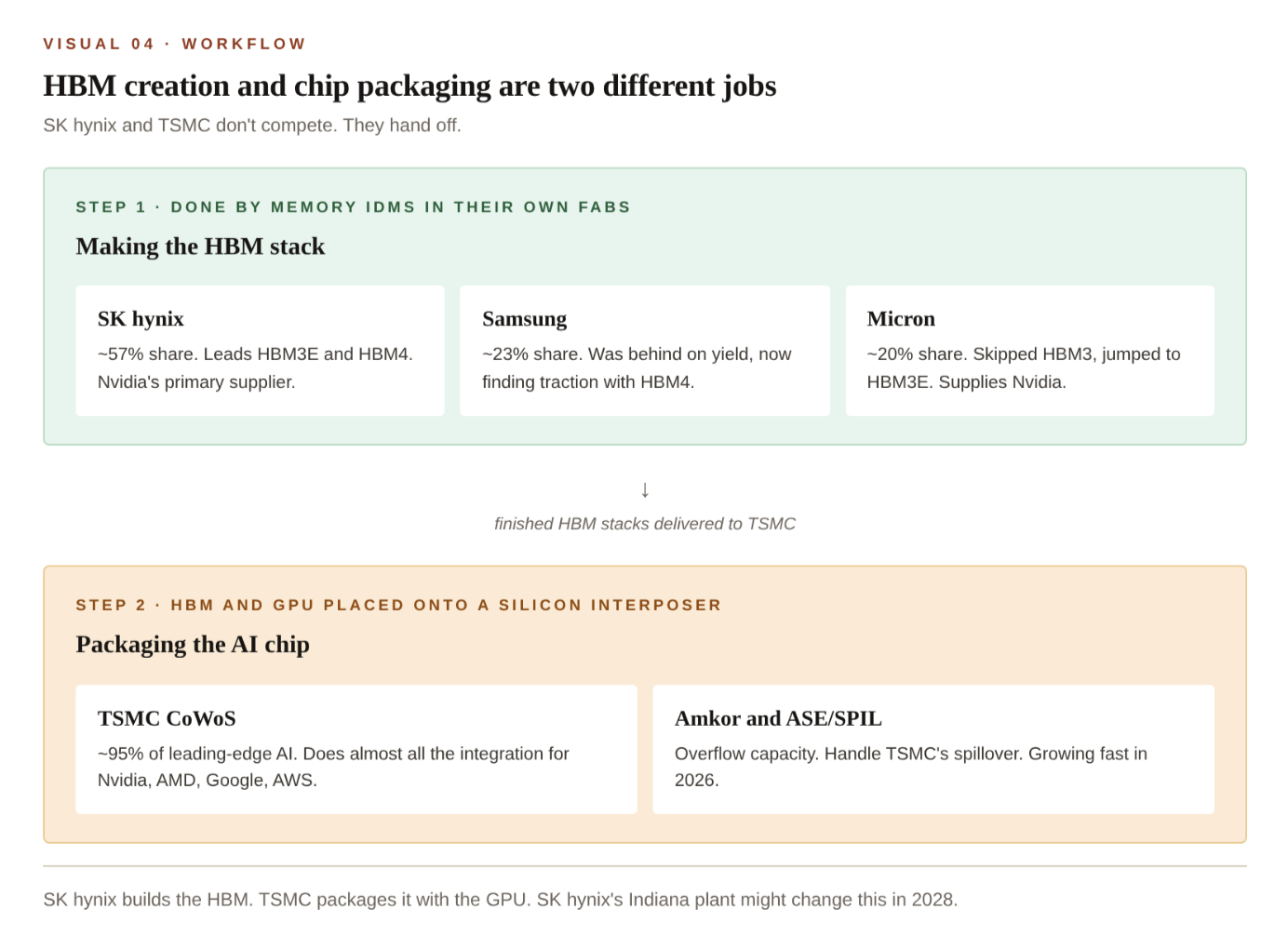

Figure 4. HBM creation and chip packaging are two different jobs. The memory IDMs build the HBM stack; TSMC then packages it with the GPU.

The first step is a memory operation. Twelve or sixteen DRAM dies are stacked vertically, connected by thousands of through-silicon vias (vertical wires running through the dies), and the whole stack is sealed into a single component called an HBM cube. This work happens inside the memory companies’ own fabs, primarily SK hynix’s facilities in Cheongju and Icheon, Samsung’s plant in Pyeongtaek, and Micron’s site in Taiwan. The second step is a packaging operation. The finished HBM cubes are shipped to TSMC, where they are placed onto a silicon interposer next to the GPU compute die that TSMC has separately fabricated for Nvidia, and the assembly is bonded together to form the final product.

In the TSMC-based supply chain described here, these two steps are not done under the same corporate roof. That is why SK hynix and TSMC do not really threaten each other in the way Nvidia and AMD do. Their relationship is sequential rather than competitive: one supplies a critical component to the other, and both depend on the same end customers buying the finished chip.

So why doesn’t SK hynix package its own HBM together with the GPU and sell the whole assembly directly? Because doing that requires being able to fabricate the GPU first, and SK hynix does not run a logic foundry — it cannot make Nvidia’s compute die. The new $3.87 billion plant in West Lafayette, Indiana, which broke ground in April 2026 and is targeted to begin production in late 2028, is the first serious attempt to work around this. Rather than fabricate the GPU itself, SK hynix is hoping to convince Nvidia to ship finished GPU wafers to Indiana, where SK hynix would handle the 2.5D integration with its own HBM. Whether Nvidia is willing to do that, given the trust and logistics implications, is one of the more important open questions in advanced packaging today.

Why 2.5D is a foundry fight in disguise

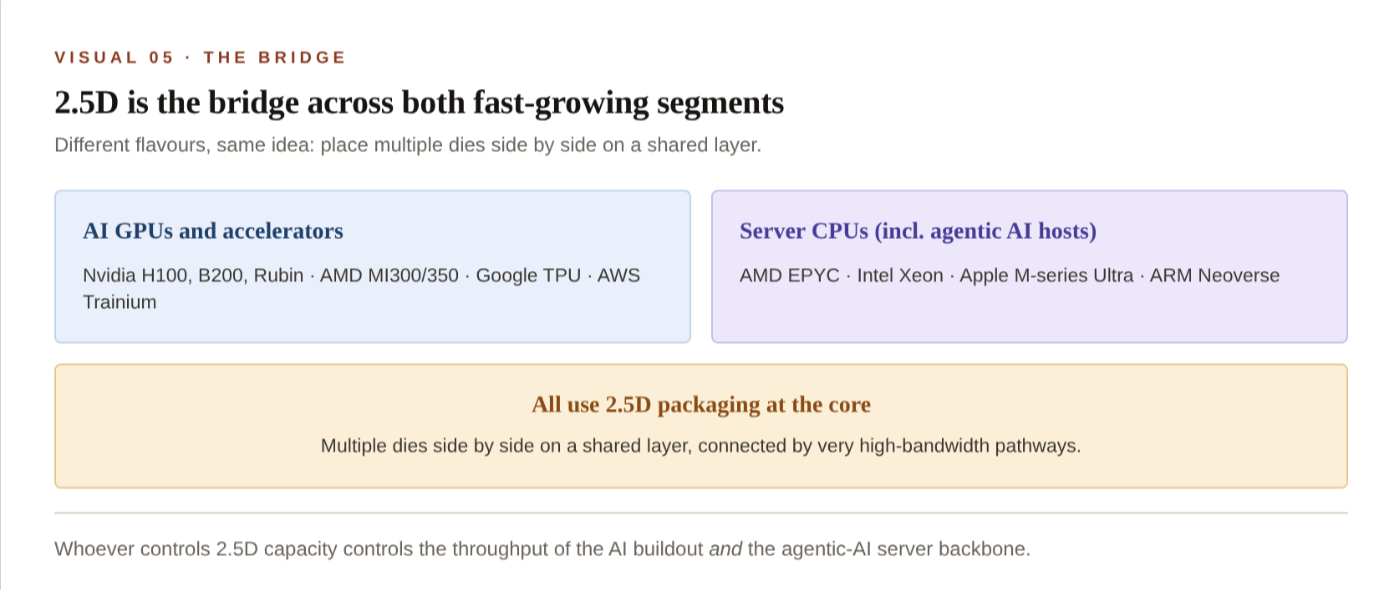

Among the seven techniques, 2.5D is unusual because it is the one being used by both of the fast-growing segments of the chip industry: AI accelerators and the latest generation of high-end server CPUs. That makes 2.5D capacity disproportionately important for everything else.

Figure 5. 2.5D packaging is the bridge across both fast-growing segments — AI GPUs and modern server CPUs all use it at the core.

The interesting question is why TSMC’s position in 2.5D has been so difficult for anyone else to challenge. On the face of it, the technology is not exclusive — ASE has a 2.5D line, Amkor has one, Intel and Samsung do their own packaging in-house, and there are several smaller players in Taiwan and Korea. But TSMC still ends up with around 95% of leading-edge 2.5D volume year after year. The explanation has more to do with how chips actually get built than with any specific piece of equipment. What customers are really buying when they choose CoWoS is not a packaging service in the narrow sense; it is end-to-end yield management for an extremely expensive piece of silicon.

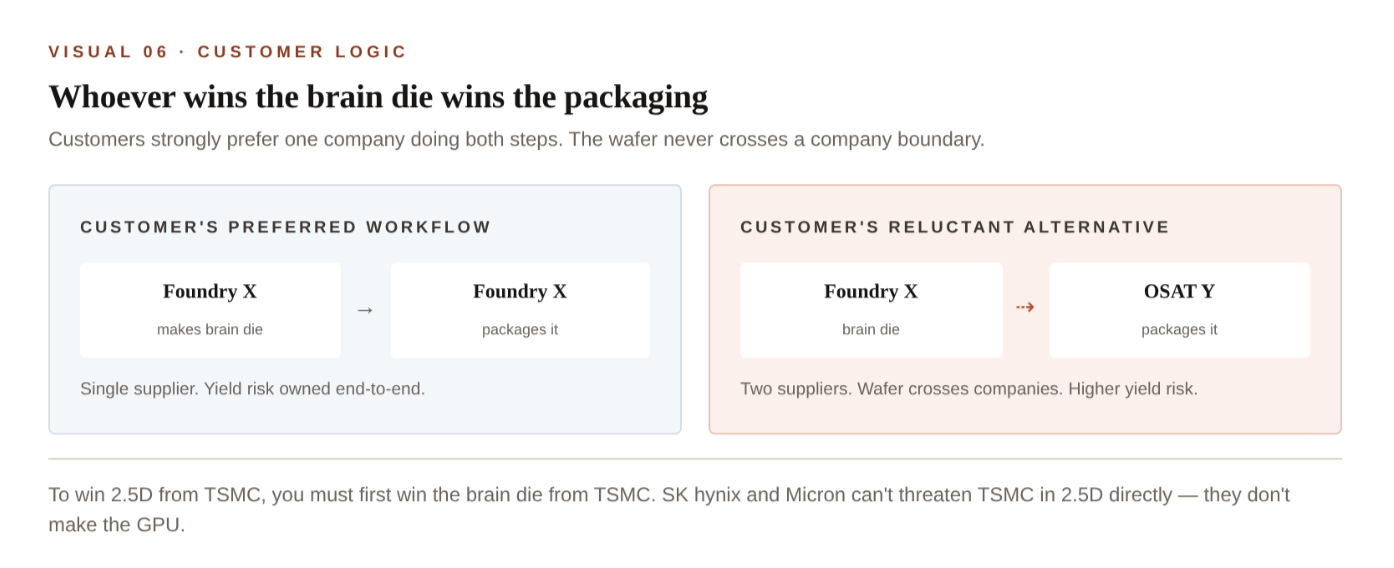

Figure 6. Customers strongly prefer one company to handle both the brain die and the packaging, because the wafer never crosses a corporate boundary and yield risk stays in one place.

When TSMC fabricates an Nvidia GPU in one of its wafer fabs and then packages it on its own CoWoS line a few buildings away, the wafer never crosses a corporate boundary. If yields fall, the failure analysis stays inside TSMC, where the engineering team can trace defects back through their own data. Customers value this enormously, particularly at the price points involved: a single advanced GPU package can be worth more than $40,000 by the time it is finished. The alternative is to fabricate the wafer at TSMC and then ship it to Amkor or ASE for packaging, which Nvidia, AMD and Broadcom do as overflow. It works, but it introduces a handoff between two companies, and when defects show up it becomes harder to know whether the fab or the packaging line is responsible. Customers accept this arrangement when TSMC’s primary line is full, which it has been continuously for the last two years, but they do not actively prefer it.

The conclusion is awkward for anyone hoping to break TSMC’s grip on 2.5D from the outside. To compete with TSMC at the packaging level, a company effectively has to compete with TSMC at the foundry level too, because customers want both halves of the job done by the same supplier. The list of companies that could credibly fabricate a leading-edge GPU is short: Intel, Samsung, eventually Rapidus in Japan, and SMIC in China for older nodes. Notably absent from that list are SK hynix, Micron, Amkor, ASE, and all the fabless players — Nvidia, AMD, Google, AWS — that buy from TSMC today. They are large and important customers, but none of them can fabricate the brain die themselves, which is why none of them is in a position to take 2.5D away from TSMC by force.

The geography of the chokepoint

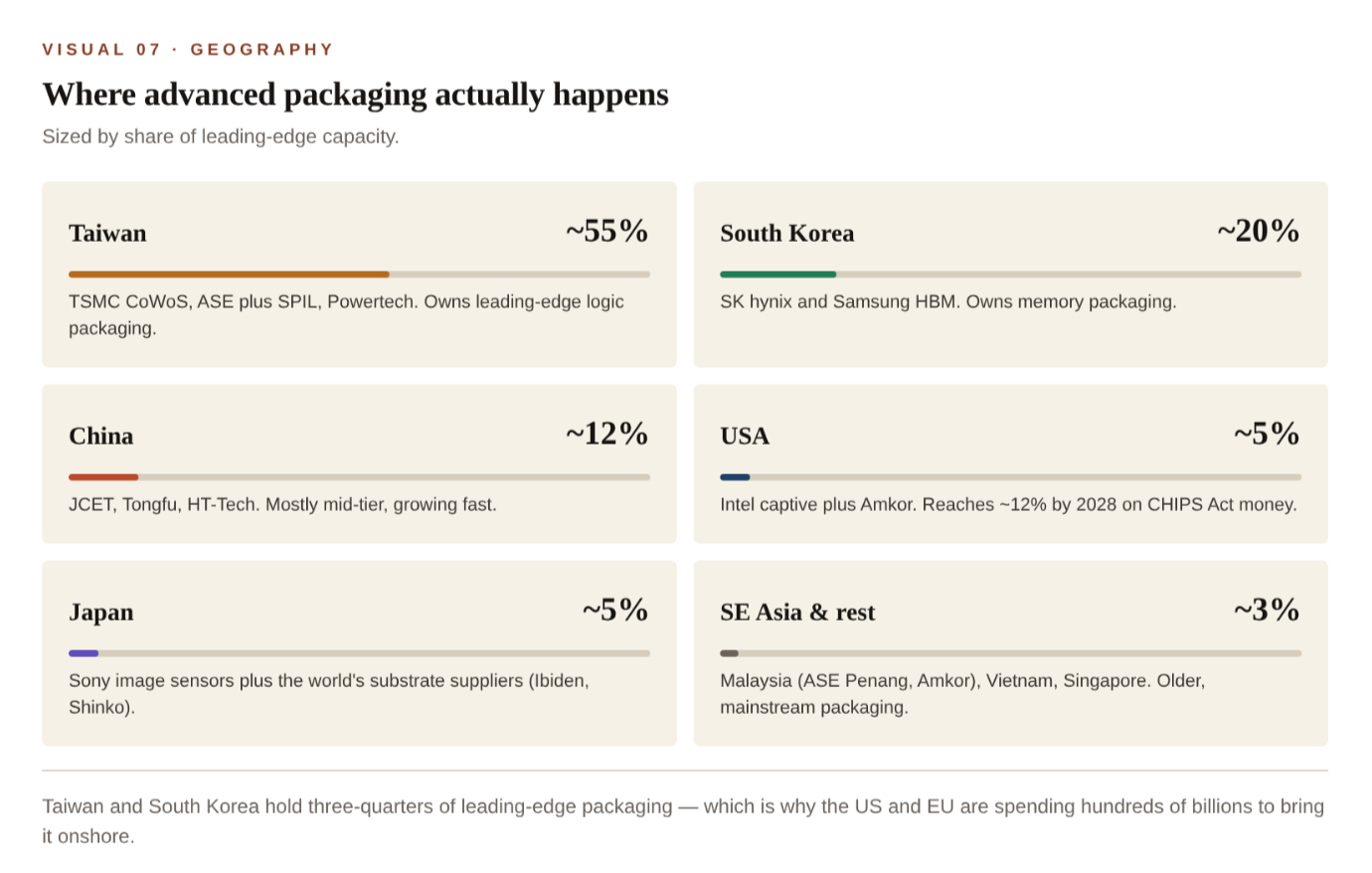

Mapping all of this geographically produces what is probably the most concentrated industrial chokepoint in the modern economy. Two countries handle the overwhelming majority of the work that turns silicon into AI systems.

Figure 7. Where the world’s advanced packaging actually happens, sized by share of leading-edge capacity.

This concentration is the real reason behind the United States’ CHIPS Act spending and the European Union’s parallel push on semiconductor sovereignty. The justification is not primarily about cost; American packaging is unlikely ever to match Malaysian or Vietnamese packaging on per-unit cost. The point is to reduce the dependency on a single geography for a part of the supply chain that has no realistic substitute. There is no second source for TSMC’s CoWoS process today, and there are no spare HBM production lines sitting idle anywhere in the world. A serious disruption to either Taiwan or South Korea, whether natural, political, or otherwise, would slow the global production of AI hardware within a matter of weeks.

Where the numbers stand in 2026

• ~95% — TSMC’s share of leading-edge 2.5D / CoWoS capacity worldwide.

• ~60% — Of 2026 CoWoS capacity already booked by Nvidia alone.

• ~53% — SK hynix’s share of HBM revenue in Q3 2025.

• ~3 years — HBM order backlog against today’s supply. Sold out through 2026 across all suppliers.

• ~$8B — Micron’s projected HBM revenue run-rate for 2026.

• ~75% — Of leading-edge packaging concentrated in Taiwan plus South Korea combined.

What changes by 2028

Three structural shifts are already underway that will change how this map looks by the end of the decade.

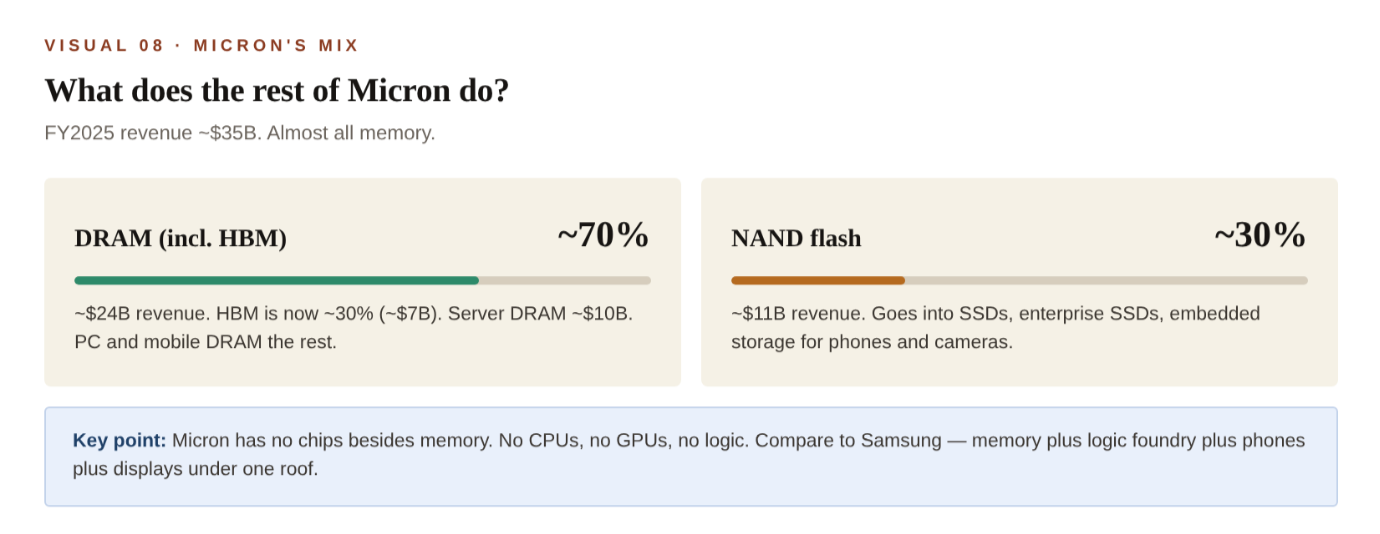

Micron becomes a pure data-centre supplier

In December 2025, Micron announced that it would exit the Crucial-branded consumer DRAM and SSD business by February 2026, ending a 29-year retail presence. The reasoning was straightforward, even if the company did not put it in these terms: with HBM in critically short supply and selling at premium margins, every wafer of capacity Micron has is more valuable when allocated to data-centre customers than when sold into consumer channels. The exit is the clearest signal yet that what was historically a broad memory company is now reorganising itself around AI infrastructure.

Figure 8. Micron’s revenue mix is essentially all memory — about 70% DRAM (with HBM the fastest-growing slice) and 30% NAND flash.

SK hynix attempts the first non-foundry 2.5D line

The Indiana plant is significant beyond its capacity numbers because of what it represents. When it begins production in late 2028, it will be the first mass-production 2.5D packaging line in the world that is operated by a company that is not also a logic foundry. If SK hynix can persuade Nvidia and AMD to send finished GPU wafers to Indiana, it will be able to offer something none of its competitors can: a single supplier for both the HBM stacks and the 2.5D integration. Whether customers will accept the additional handoff that this requires is uncertain, but the project is moving forward partly because the alternative — leaving all advanced packaging concentrated in Taiwan — has become politically uncomfortable for the US government.

The geographic concentration eases, but only slightly

The United States’ share of leading-edge packaging is on track to more than double by 2028, from about 5% today to around 12%, driven by CHIPS Act funding flowing into TSMC’s Arizona campus, Intel’s expanding packaging operations, Amkor’s new $7 billion Arizona facility, and the SK hynix Indiana plant. South Korea will also gain share through expansions at Samsung and SK hynix. China’s domestic packaging industry, particularly JCET and Tongfu, continues to grow rapidly with state support, though it remains concentrated in mid-tier techniques. Taiwan will still be the centre of gravity in 2028, but the world will have a few more nodes of supply-chain resilience than it does today. None of this rebalancing happens quickly enough to relieve the 2026 shortage.

The takeaway

The dominant framing of AI as a software phenomenon sitting on top of an Nvidia hardware story understates how much of the actual constraint sits further down the stack. The companies that are best positioned to earn outsized economic rents from the AI buildout are not necessarily the ones designing the chips. They are the ones that have the physical capacity to assemble them — TSMC at the system level, SK hynix at the memory level — along with a small group of equipment makers selling the tools that make any of this possible. Two countries hold around three-quarters of the relevant capacity. Three companies hold almost all of HBM. One company holds almost all of leading-edge 2.5D. For the next two years at least, this small group sets the practical speed limit on how quickly the AI economy can grow.

Sources: Counterpoint Research, TrendForce, Yole Group, JPMorgan, SemiWiki, and primary disclosures from TSMC, SK hynix, Micron, Samsung, Amkor and ASE Holdings (2025–2026 fiscal years). Market share figures fluctuate quarter to quarter; the strategic picture does not. Updated May 2026.