For most of the past 50 years, the way to make a computer chip faster was simple. Shrink the transistors. Fit more of them on a single piece of silicon. Repeat every couple of years.

That formula carried the industry from the era of room-sized mainframes to the smartphone in your pocket. By the late 2010s, though, it stopped working as well as it used to. Transistors had become so small they were measured in atoms, and shrinking them further turned into a brutal engineering problem.

So chipmakers shifted strategy. If a single piece of silicon cannot get much faster on its own, what if you cleverly combined several pieces of silicon inside one package, and made them work together?

That idea is called advanced packaging. It is the reason modern AI chips exist, and over the past five years it has quietly become one of the most valuable bottlenecks in the entire technology industry. This is a plain-English guide to what it actually is.

1. What is a chip, really?

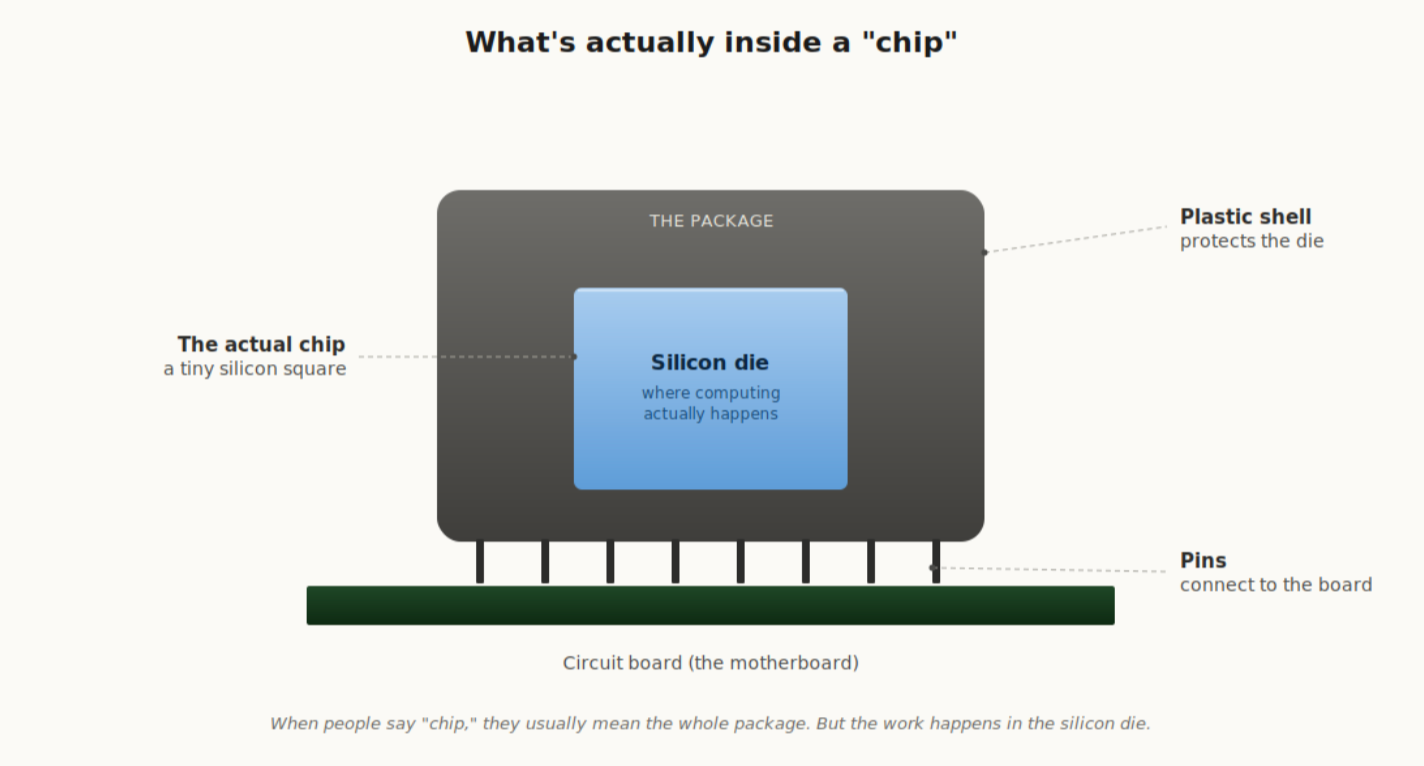

When people say "chip," they usually mean two things at once.

- The silicon die. The tiny gray square where computing actually happens.

- The package. Everything around the die that protects it and connects it to the device it sits in.

For decades the package was an afterthought. Plastic shell, metal pins, done. The silicon die was the star. Then around 2020 things changed, and the packaging part suddenly became the most interesting question in the whole industry.

Figure 1. When people say "chip," they usually mean the whole package. The actual computing happens in the silicon die inside it.

2. Why a single die is no longer enough

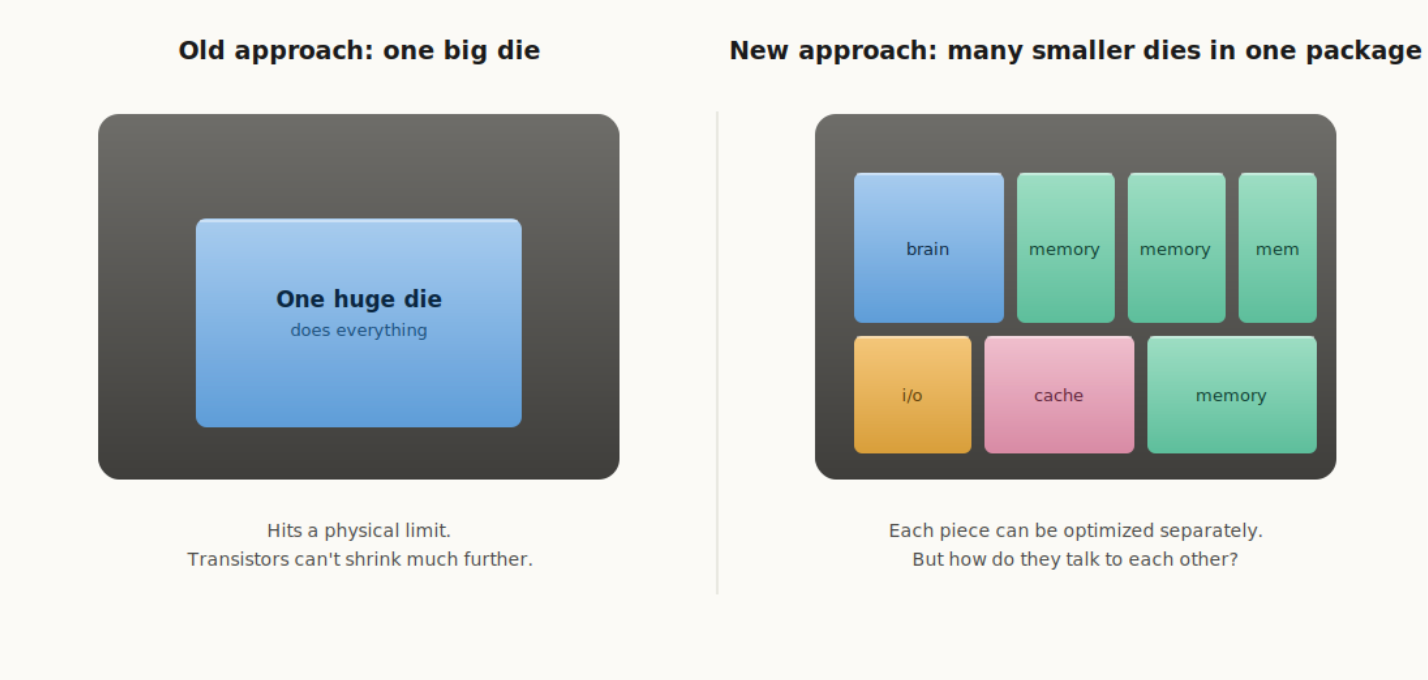

For decades, the recipe for faster chips was to shrink transistors on a single die. Smaller transistors meant more of them in the same area, which meant a faster chip. The recipe was reliable and predictable.

By the late 2010s, the smallest features of a transistor were only a handful of atoms across. You cannot get much smaller than that without running into the laws of physics. Each new generation of process technology was costing more and delivering less than the one before it.

Engineers responded by asking a different question. Instead of trying to squeeze everything onto one die, what if you used several smaller, specialized dies and made them work together inside the same package? A brain die over here, a memory die over there, an input/output die alongside them.

Figure 2. The shift in approach: from a single huge die to many specialized dies cooperating in one package.

This idea solves the shrinking problem, but it creates a new one. If you have several chips that need to cooperate, how do they actually talk to each other? That question turns out to be everything. The faster the dies can communicate, the faster the whole package runs.

3. The connection problem, in plain English

Think of a chip like a small city. Each die is a building. The buildings constantly send messages to each other, and the speed of those messages is what decides how fast the whole city operates.

If your home, office, and grocery store are far apart with terrible roads between them, life is slow. If they sit next to each other and there are high-speed pathways connecting them, life is fast.

Packaging is the road system. Different packaging techniques are different kinds of roads. There are four main ones in modern chips, and each solves a different real-estate problem. Let us look at them one at a time.

4. Flip chip, the workhorse

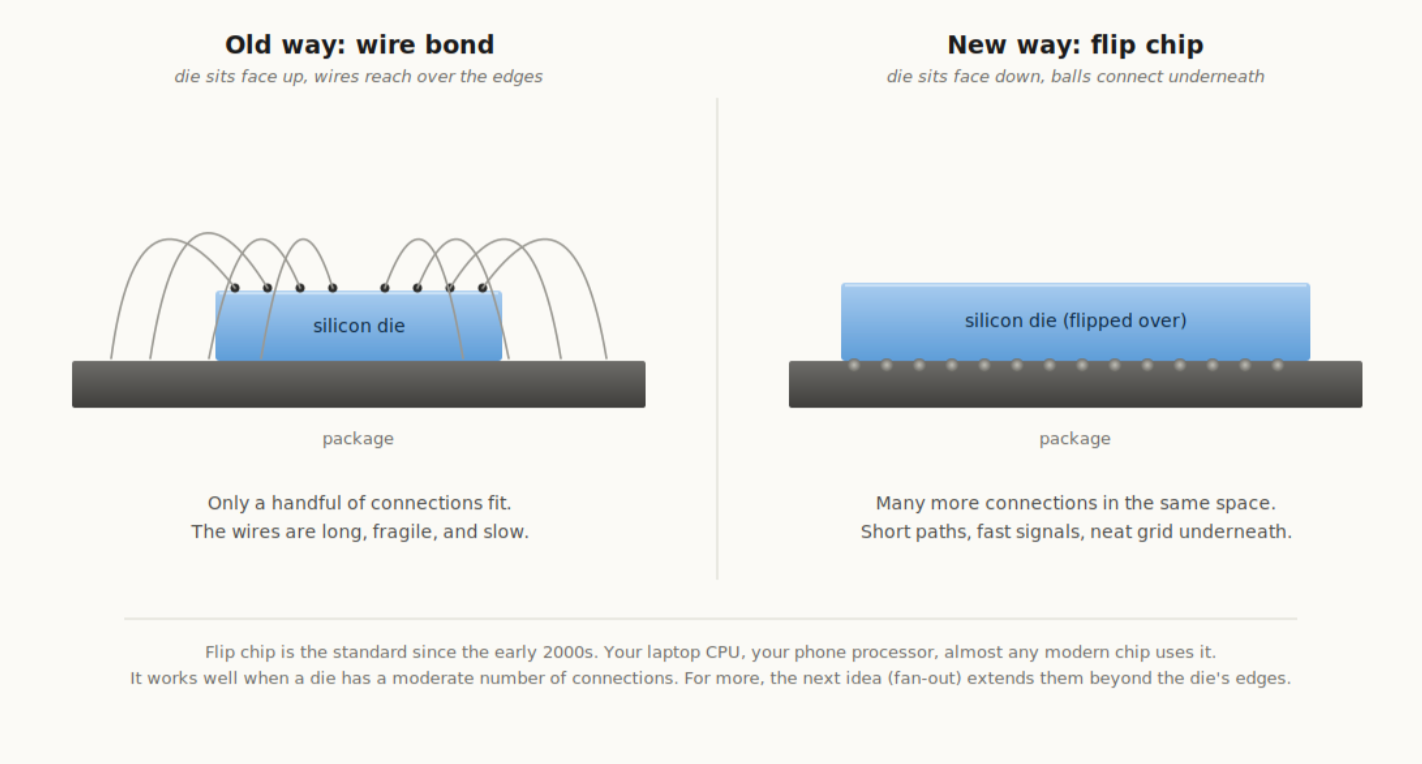

Imagine you have a piece of paper covered in tiny dots, and you need to connect each dot to something underneath. There are two ways to do it.

The old way is wire bond. Lay the paper flat with the dots facing up, and run a separate wire from each dot, over the edge of the paper, down to whatever sits below. If you have a hundred dots, that means a hundred wires, all crowding around the edges. It works, but you can only fit so many before the wires start tangling. The signal paths are also long, which makes them slow.

The new way is flip chip. Flip the paper over so the dots face down. Put a tiny ball of solder on each dot, press the paper onto the surface below, then heat it up so the solder melts and bonds. Now every dot is connected straight downward in a clean grid pattern, with much shorter paths and many more connections in the same area.

That is all flip chip is. The chip is flipped upside down so its connection points face the package, and the connections are made through tiny solder balls underneath.

Figure 3. Wire bond uses long, fragile wires reaching over the edges of the die. Flip chip uses a neat grid of solder balls underneath.
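To see why the grid wins, it helps to count. The sketch below is a rough illustration, not real design data: the die size and spacing are invented round numbers, and it assumes wire bond pads and solder balls can sit at the same spacing, which real chips do not guarantee. The function names are made up for this example.

```python
# A rough counting sketch. The die size and spacing are invented round numbers.


def wire_bond_pads(die_side_um: int, pitch_um: int) -> int:
    """Pads in one row around the edge of a square die (the wire bond layout)."""
    per_side = die_side_um // pitch_um
    if per_side < 2:
        return per_side
    # Four sides, with each corner pad counted only once.
    return 4 * (per_side - 1)


def flip_chip_bumps(die_side_um: int, pitch_um: int) -> int:
    """Solder balls in a full grid across the underside of a square die."""
    per_side = die_side_um // pitch_um
    return per_side * per_side


if __name__ == "__main__":
    side_um, pitch_um = 10_000, 100  # assumed: 10 mm die, 100 micrometre spacing
    print(wire_bond_pads(side_um, pitch_um))   # 396
    print(flip_chip_bumps(side_um, pitch_um))  # 10000
```

Edge connections grow with the length of the die's sides, while grid connections grow with its area. That is why the gap widens as the spacing shrinks.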

Flip chip is the standard, mature workhorse of the industry. It has been the default for laptop CPUs and phone processors since the early 2000s. Almost any modern chip uses flip chip somewhere in its construction.

It works well when the die has a moderate number of connection points. Modern chips, though, often need many more connection points than fit comfortably under a single die. That is where the next idea comes in.

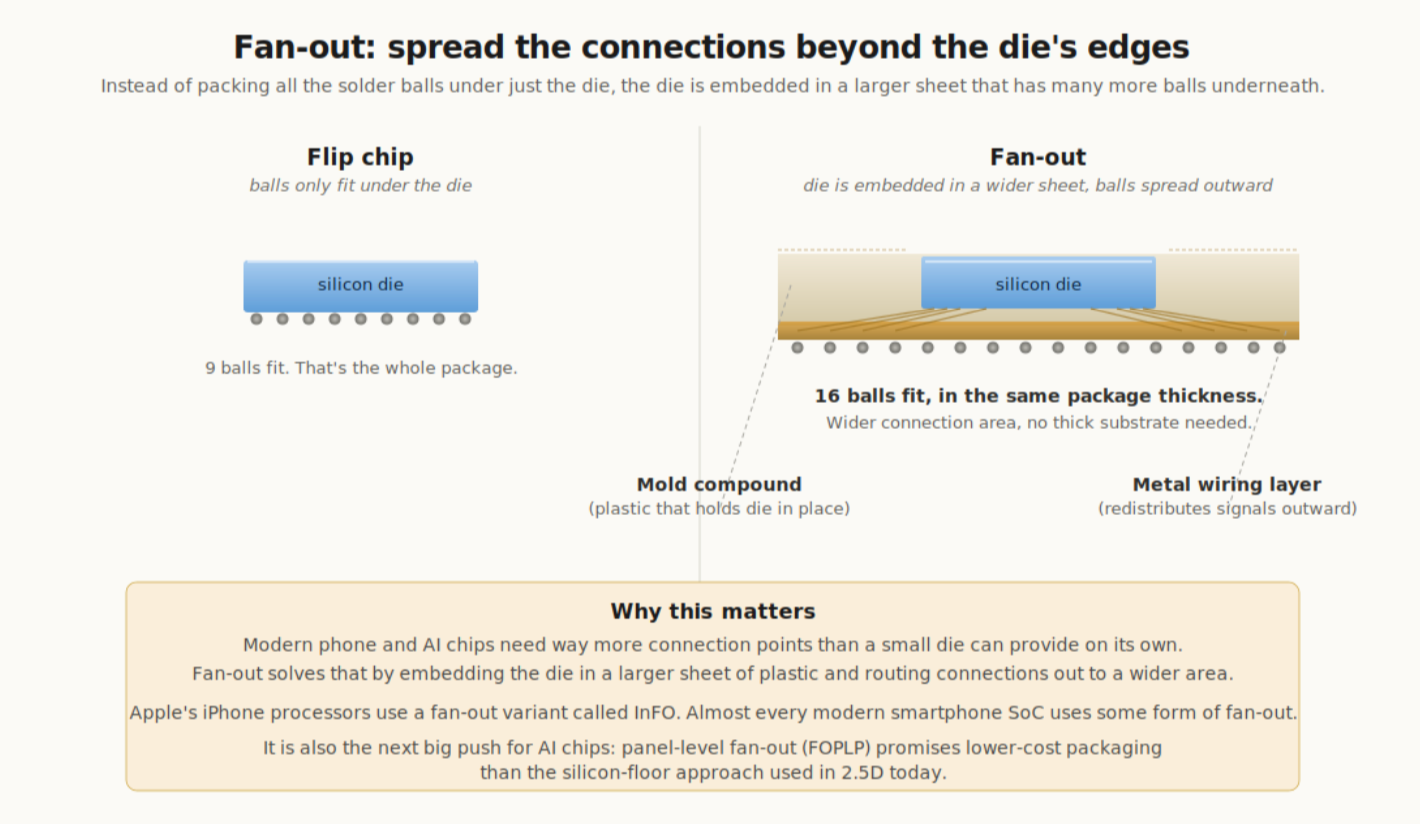

5. Fan-out, spread the connections beyond the die

Imagine a small die with thousands of connection points underneath, in flip chip style. The package ends up roughly the same size as the die. If you need more connection points than fit underneath, you are stuck.

Fan-out solves this with a clever trick. Take the bare die and embed it in a larger sheet of plastic, called mold compound, so the die sits flush in the middle of a wider surface. Now build a thin layer of metal wiring directly onto that sheet. The wiring routes signals outward, beyond the die's original edges, to a much wider grid of solder balls underneath.

The connections have been fanned out from the die's narrow footprint to a wider footprint. Hence the name.

Figure 4. Flip chip can only fit balls under the die itself. Fan-out embeds the die in a larger sheet and routes the signals outward, fitting many more balls in the same package thickness.
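The same kind of counting shows what the wider sheet buys you. This is a minimal sketch under made-up assumptions: a square die, a square package, and one fixed ball spacing. The numbers and function names are hypothetical, chosen only to make the arithmetic easy to follow.

```python
import math

# Illustrative numbers only: die size, ball spacing, and ball count are invented.


def balls_that_fit(side_um: int, ball_pitch_um: int) -> int:
    """Solder balls in a full square grid on a surface with the given side length."""
    per_side = side_um // ball_pitch_um
    return per_side * per_side


def min_package_side_um(balls_needed: int, ball_pitch_um: int) -> int:
    """Smallest square side that holds at least `balls_needed` balls (needs >= 1)."""
    per_side = math.isqrt(balls_needed - 1) + 1  # ceiling of the square root
    return per_side * ball_pitch_um


if __name__ == "__main__":
    die_side_um, pitch_um, needed = 5_000, 400, 400  # all assumed
    print(balls_that_fit(die_side_um, pitch_um))   # 144 fit under the die alone
    print(min_package_side_um(needed, pitch_um))   # 8000, so an 8 mm fan-out area
```

In this invented case the die alone has room for 144 balls, so reaching 400 means spreading the grid across an 8 mm square around a 5 mm die.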

Fan-out has two big advantages. The package is thinner, because there is no thick organic substrate underneath. And the signals are faster, because the paths from die to ball are shorter and travel through cleaner, more uniform metal layers.

Apple is the most famous user. Every iPhone processor since the A10 has been packaged using a TSMC fan-out variant called InFO (Integrated Fan-Out). Many other smartphone chips use some form of fan-out too. Automotive radar chips and 5G RF modules also use it heavily, because the size and signal quality benefits matter a lot in those applications.

There is a newer version called fan-out panel-level packaging, or FOPLP, which uses big rectangular panels instead of round wafers. Same idea, larger surface area, lower cost per chip. Samsung is investing heavily in FOPLP because it could become the cheaper packaging option for high-volume AI accelerators in 2027 and beyond, undercutting the silicon-interposer approach used in 2.5D, which the next section covers.

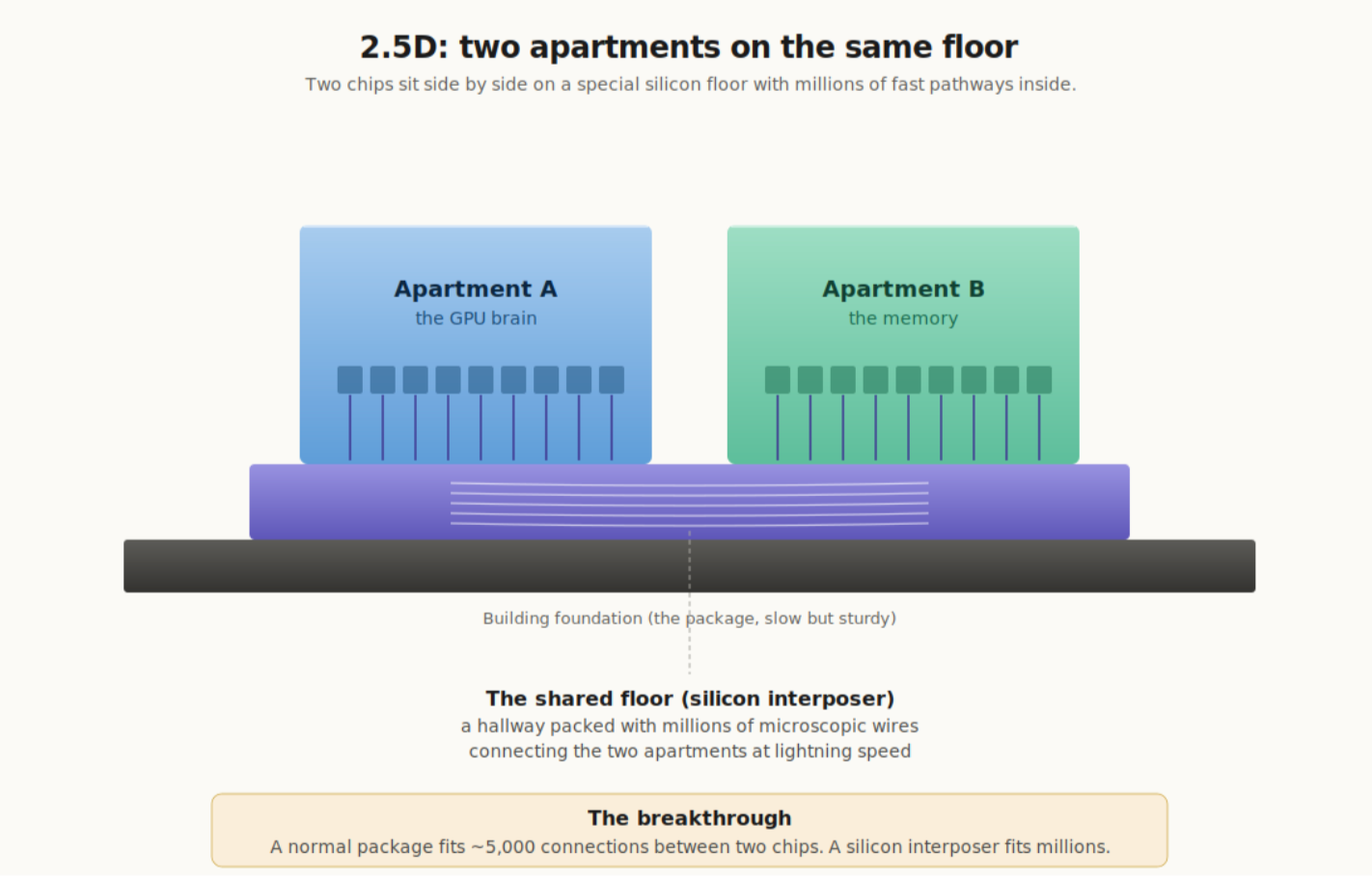

6. 2.5D, two apartments on the same floor

Picture two chips that need to talk to each other constantly. Say a brain chip (a GPU) and a memory chip. They have to swap huge amounts of data, instantly, all the time.

If they sit on a regular package, the connections between them have to travel through the package's thick plastic substrate. That is slow. Walking-through-mud slow.

The trick is to build a second, super-thin layer of silicon between the chips and the package. Both chips sit on this special layer like two apartments sharing the same floor. Engineers call this layer an interposer. Inside it, you can pack millions of microscopic wires, like a hallway stuffed with cables.

The reason this works is the material. The interposer is silicon, the same stuff as the chips themselves. Silicon can hold a far higher density of microscopic wires than plastic substrate ever could. So the two chips end up connected through millions of incredibly short, fast paths in the floor between them.

The name comes from the geometry. The chips are arranged flat next to each other (that is the 2D part), but they sit on a special silicon layer that adds a tiny bit of vertical depth. So the industry settled on calling it 2.5D. Engineers are not famous for their naming skills.

Figure 5. Two apartments on the same floor. The two chips share a silicon "floor" with millions of microscopic wires inside it, letting them swap data at incredible speeds.

This is the breakthrough that makes modern AI chips possible. A normal package can fit roughly 5,000 connections between two chips. A silicon interposer fits millions. Nvidia's flagship data-center AI chips (H100, B100, B200, and the upcoming Rubin chips) are all built this way. TSMC's version of 2.5D is called CoWoS, and right now the entire AI industry is bottlenecked by how fast TSMC can build these packages.

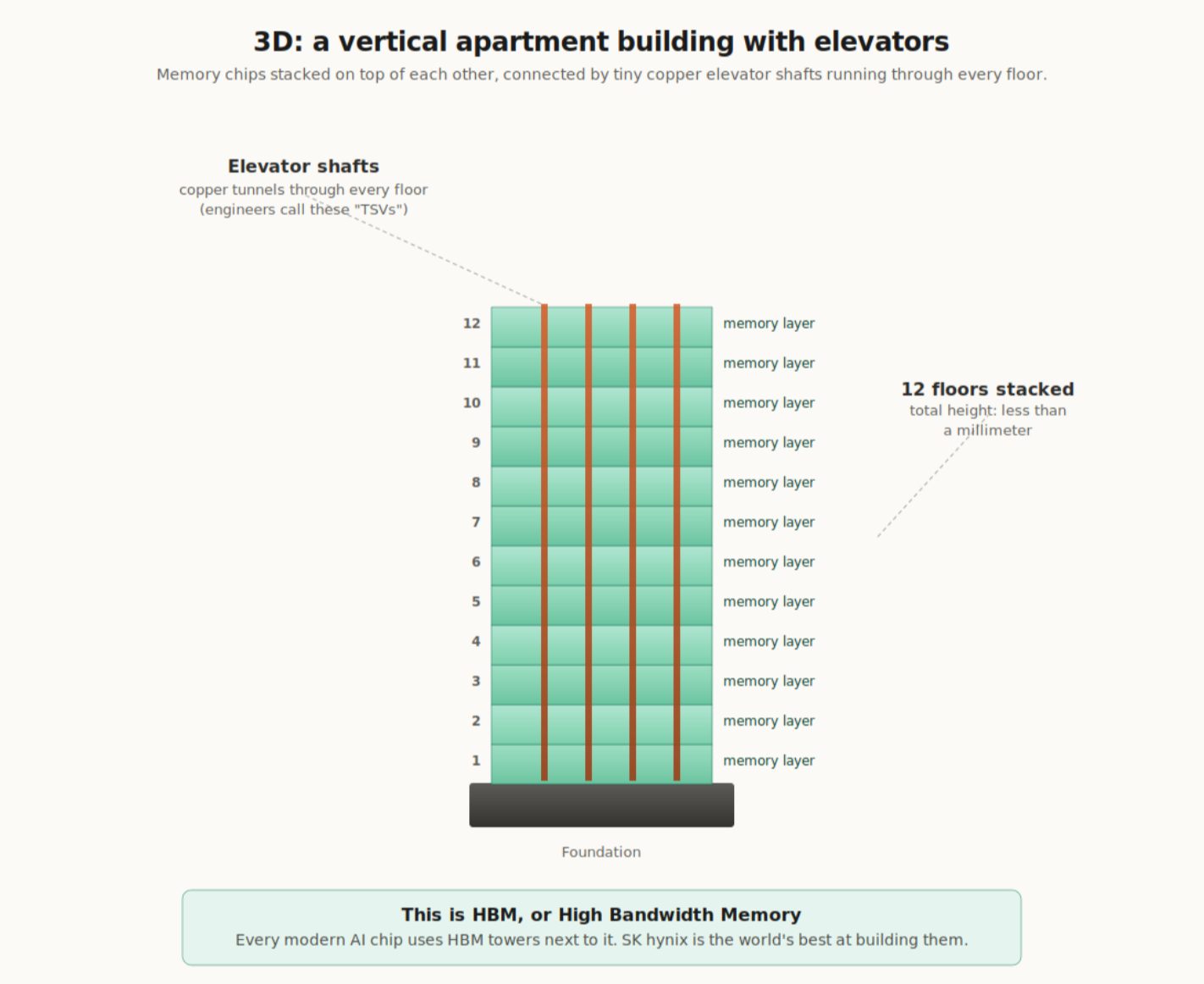

7. 3D, a vertical apartment building with elevators

Now imagine you do not just need two chips next to each other. You need a lot of memory close to the brain. Eight memory chips' worth, maybe more.

You could put eight memory chips in a row next to the brain, all on a 2.5D shared floor. But they would take up too much horizontal space, and the ones at the far end would be slower because their connections would be longer.

A better answer is to stack them vertically. Instead of eight memory chips spread out along a street, build a single tall apartment building with eight floors of memory in it.

Stacked chips create a new puzzle, though. How do they connect to each other? You cannot run wires between them, because there is no gap. The solution is to drill microscopic holes straight down through every chip in the stack, and fill those holes with copper. Each filled hole acts like an elevator shaft, carrying signals up and down through every floor of the building.

Engineers call these elevator shafts TSVs (Through-Silicon Vias, or "tunnels through silicon"). Signals travel vertically, floor to floor, through tiny copper elevators.

Figure 6. A vertical apartment building with elevators. Twelve floors of memory stacked on top of each other, less than a millimeter tall in total, connected by copper elevator shafts that run through every floor.

A modern stack can have 12 floors. Each layer is thinner than a human hair, and the whole stack is less than a millimeter tall. Thousands of those copper elevators run through it. As an engineering achievement, it is genuinely remarkable.
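A quick sanity check shows how twelve floors can fit in under a millimeter. The sketch below uses assumed numbers, not measurements: a 50 micrometre layer, a 10 micrometre bonding gap between layers, and a 70 micrometre human hair are all illustrative guesses, and the function name is made up.

```python
# All thicknesses below are assumptions for illustration, not measured values.

HUMAN_HAIR_UM = 70      # assumed typical hair width, in micrometres
LAYER_UM = 50           # assumed thickness of one thinned memory layer
BOND_GAP_UM = 10        # assumed gap taken up by the joints between layers


def stack_height_um(layers: int, layer_um: int, gap_um: int) -> int:
    """Total height: every layer, plus one gap between each neighbouring pair."""
    return layers * layer_um + (layers - 1) * gap_um


if __name__ == "__main__":
    height = stack_height_um(12, LAYER_UM, BOND_GAP_UM)
    print(height)                    # 710 micrometres
    print(height < 1_000)            # True: under one millimeter
    print(LAYER_UM < HUMAN_HAIR_UM)  # True: each layer thinner than the assumed hair
```

The point is only that the claim is plausible: layers thinner than a hair, stacked twelve high, stay comfortably under a millimeter.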

This is what HBM, or High Bandwidth Memory, actually is: a 3D stack of memory chips. Every modern AI chip needs HBM towers next to its brain. SK hynix is currently the world leader at building these towers. Samsung and Micron are second and third. Without HBM, modern AI training does not work, because there would be no way to feed data to the brain chip fast enough.

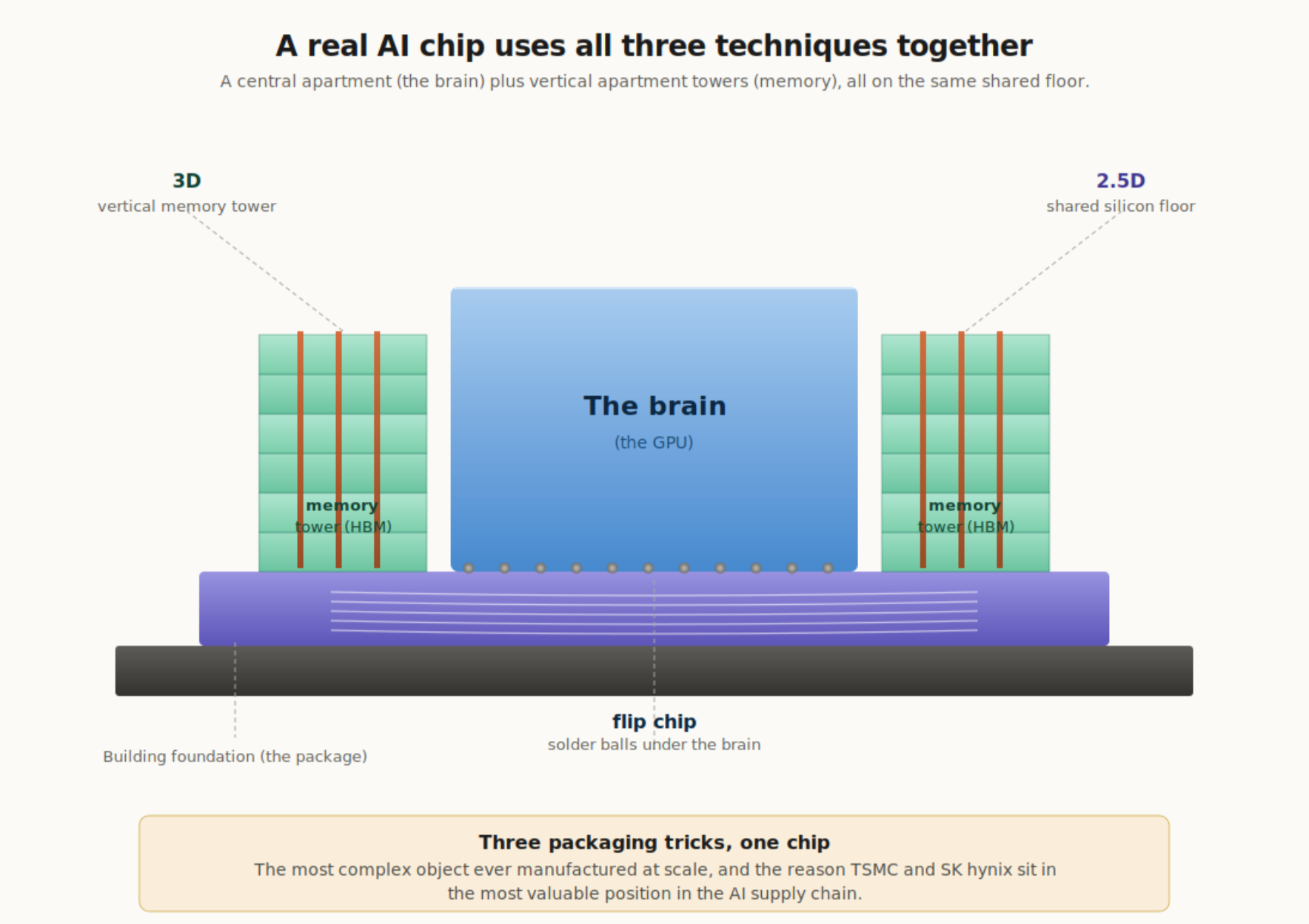

8. Putting it together: what an Nvidia AI chip really is

Now combine everything. When you read about an Nvidia AI chip, what is actually inside that single package?

It uses three of the four techniques at once.

- The brain chip in the middle connects to the silicon floor beneath it using flip chip. Solder balls underneath.

- The memory towers on either side are 3D stacked HBM. Vertical apartment buildings, each with 12 floors and copper elevators.

- The whole assembly sits on a shared silicon floor (the 2.5D interposer) that lets the brain talk to all the memory towers at lightning speed.

Figure 7. A real AI chip uses all three packaging techniques together. 3D vertical memory towers on the sides, a 2.5D shared silicon floor underneath them, and flip chip solder balls connecting the brain to that floor.
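For readers who think in code, the same parts list can be written as a small data model. This is only a sketch of the description above, not a real design: the class names, the `Part` type, and the part labels are invented for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Technique(Enum):
    """The four packaging techniques covered in this guide."""
    FLIP_CHIP = "flip chip"
    FAN_OUT = "fan-out"
    INTERPOSER_2_5D = "2.5D"
    STACK_3D = "3D"


@dataclass(frozen=True)
class Part:
    """One piece of the package and the technique that attaches or builds it."""
    name: str
    technique: Technique


# Hypothetical parts list mirroring the three bullets above.
AI_PACKAGE = [
    Part("brain die", Technique.FLIP_CHIP),
    Part("HBM memory tower", Technique.STACK_3D),
    Part("shared silicon floor", Technique.INTERPOSER_2_5D),
]


def techniques_used(parts: list[Part]) -> set[Technique]:
    """Collect the distinct techniques that appear in a parts list."""
    return {part.technique for part in parts}


if __name__ == "__main__":
    used = techniques_used(AI_PACKAGE)
    print(sorted(t.value for t in used))  # ['2.5D', '3D', 'flip chip']
    print(Technique.FAN_OUT in used)      # False
```

Three of the four techniques show up in the list. Fan-out is the one that does not.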

It is a city built using all three architectural techniques at the same time. Individual buildings (flip chip), a downtown of vertical apartment towers (3D), and a network of high-speed roads connecting everything (2.5D).

This is why people say modern AI chips are the most complex objects ever manufactured at scale, and why the companies that can do all three steps reliably (TSMC for the brain and the shared floor, SK hynix for the memory towers) are now sitting on the most valuable position in the entire AI supply chain.

9. Quick recap, in four analogies

Flip chip is like pressing a stamp face-down onto an ink pad. A neat grid of dots underneath, instead of messy wires reaching over the edges.

Fan-out is like putting a small photo in the middle of a larger frame, then drawing lines outward to a wider area. The chip is small, but the package gets enough room to fit all the connections it needs.

2.5D is like two apartments sharing the same floor, connected by a hallway packed with millions of pathways.

3D is like a vertical apartment building with an elevator, many floors stacked on top of each other and connected by copper elevator shafts running through them all.

The most advanced AI chips today combine three of these: flip chip underneath the brain, a 2.5D shared floor for the whole package, and 3D vertical towers for the memory. Fan-out lives in a different world. It dominates the smartphone in your pocket, and a panel-level version of it is now coming for AI.

That is the entire reason packaging suddenly became one of the most important parts of the chip industry. The brain chips get the headlines. None of them work without the packaging that lets all the pieces talk to each other.